Desirable, Possible, Probable, Plausible, Impossible

Why the language of Foresight is far less stable than we pretend

One of the quiet illusions inside Foresight is that the words we use are more stable than the futures we are trying to explore.

We say possible, plausible, probable, trend, uncertainty, wildcard, weak signal, as if these terms came with edges. As if they pointed to clearly bounded territories. As if, once spoken, they organized reality in a way that others would understand more or less as we do.

But they do not.

And that matters more than it seems.

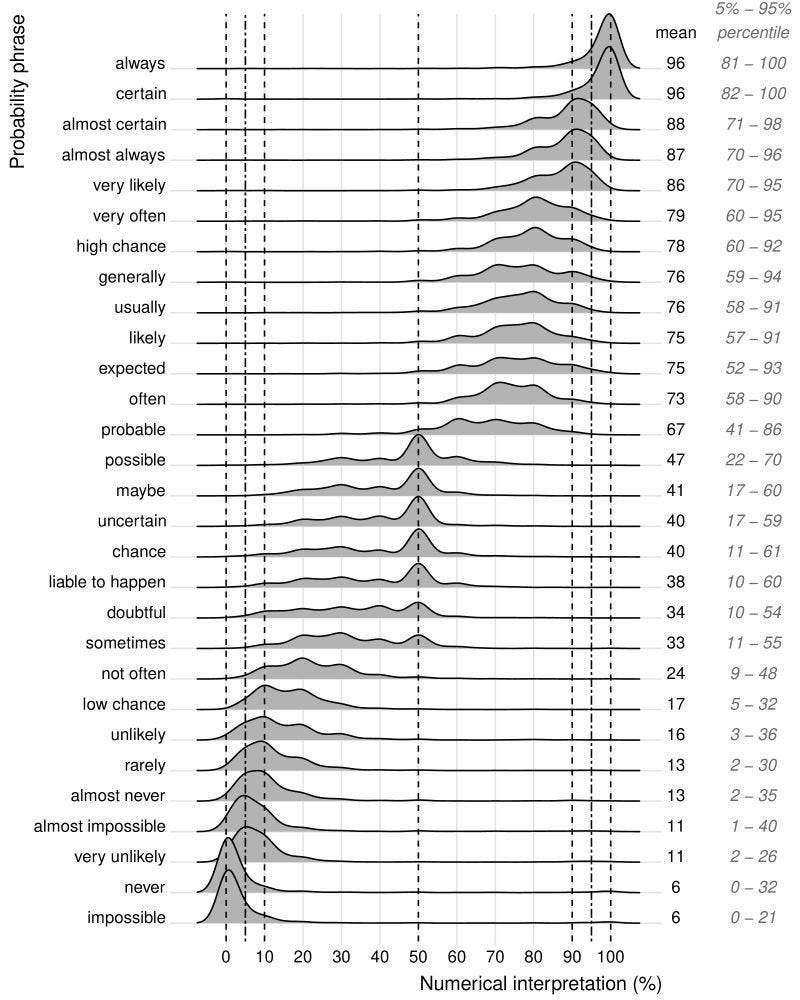

Thomas D’hooge shared a study published in the Journal of Science Communication that I believe is an unexpectedly useful provocation for anyone working in Foresight. Looking at how Dutch speakers interpreted verbal probability terms, the researchers found something both intuitive and unsettling: there is more agreement around the extremes, but much less once we move into the middle of the spectrum. Terms such as always, certain, never, and impossible remain relatively clustered. But terms such as possible, maybe, uncertain, probable, or likely begin to drift. Their meanings spread out. Their interpretation becomes less shared, less stable, less precise.

Figure 1: Variability in the interpretation of probability phrases used in Dutch news articles (Willems, Albers and Smeets, 2020).

This is not a trivial semantic issue.

It goes to the heart of how we make sense of the future.

The ambiguity is not only in Futures Thinking. It is also in the language we use to approach it.

That is why I find this “verbal probability terms” approach so interesting and so useful. Not because the numerical interpretation proposed by the study is necessarily the correct one. It is not that the word likely secretly means 75% and that we can now all proceed with new confidence. That would miss the point entirely.

Its value lies elsewhere.

What the study does brilliantly is make visible something that is usually left implicit: the probabilities we tend to associate, often without saying so, with the categories and expressions we use in Foresight.

And once you see that, a great deal becomes easier to explain.

Foresight is full of probability language, even when it does not look like it

When we conduct a Foresight project, especially in the Scanning phase, we usually try to identify different types of driving forces and signals. Megatrends. Trends. Weak signals or early warnings. Wildcards or black swans. Critical uncertainties.

We often speak as if these were simply different kinds of phenomena waiting to be objectively identified and placed into the right analytical drawer.

But that is never really what is happening.

When we classify something as a megatrend, we are not merely describing it. We are also making a judgment about persistence, visibility, scope, maturity, and confidence. When we call something a trend, we are suggesting a certain degree of pattern and traction. When we describe something as a weak signal, we are implicitly recognizing ambiguity, low visibility, and emergence. When we frame something as a wildcard or black swan, we are usually placing it in the territory of low perceived probability but potentially high impact. When we speak of a critical uncertainty, we are saying something else again: not necessarily improbable, but unresolved enough to remain structurally open.

These are not just descriptive labels.

They are judgments about the shape and status of change.

Which means they are also, in a deeper sense, probability-laden labels.

Possible, plausible, probable: these are not neutral descriptors. They are judgments disguised as categories.

This is precisely why the study is so useful for Foresight. Not because it gives us a formula, but because it reveals how unstable the very language of likelihood can be, especially in the middle ranges where so much of strategic interpretation actually happens.

The middle is where the trouble begins

The extremes are relatively easy.

“Impossible” tends to signal something close to zero. “Certain” tends to signal something near complete confidence. “Never” and “always” also retain a relatively strong shared meaning, even if not a perfect one.

But the middle is where interpretation starts to fray.

And the middle is exactly where much of Foresight lives.

The language of Foresight is rarely the language of certainty. It is the language of emergence, ambiguity, conditionality, and strategic interpretation. We work with developments that are not yet fully formed, with shifts whose evidence is uneven, with early traces that may or may not become more consequential, with competing dynamics that may reinforce or cancel each other out. In that territory, words such as possible, likely, uncertain, plausible, or emerging carry enormous weight.

But they do not carry shared precision.

That is why so many workshop discussions circle around what appear, on the surface, to be classification issues but are actually interpretation issues.

Is this really a trend, or is it still only a weak signal?

Is this a genuine uncertainty, or simply a poorly evidenced development?

Is this so marginal that it should remain peripheral, or is it precisely the kind of weak early warning that deserves more attention?

Is this a wildcard, or merely an improbable continuation of an already visible trajectory?

These are not technical sorting problems alone.

They are problems of collective sense-making.

What experienced practitioners know, but often struggle to explain

Most experienced Foresight practitioners know this already.

They know that the categories are useful, but not clean. They know that the boundaries are porous. They know that the same development may be interpreted differently depending on the time horizon, the focal issue, the system boundary, the geography, the sector, or the strategic question at stake. They know that one person’s trend may be another person’s uncertainty, and that what appears marginal today may, in hindsight, become the early visible trace of something much bigger.

But this is often difficult to explain to participants, stakeholders, and decision makers.

Especially at the beginning of a project, many people expect the process to produce firm labels. They want clarity in the form of stable classification. They want to know what counts as a “real trend,” what belongs in the category of uncertainty, what is too unlikely to matter, and what should be taken seriously.

That desire is understandable.

But it rests on a misunderstanding of what good Foresight actually does.

Good Foresight is not a machine for turning ambiguity into false objectivity. It is a disciplined way of working with ambiguity without collapsing into vagueness.

Foresight categories do not eliminate ambiguity. They help us work through it.

The study on verbal probability terms helps make this easier to communicate. It shows that instability does not begin only when we classify external developments. It begins one layer earlier, in the very language through which we express judgments of likelihood, confidence, and expectation.

In other words, the fuzziness is not merely “out there” in the world.

It is also in here, in our words.

From fixed boxes to a spectrum

This is why I think it is more accurate to treat the different types of forces and signals used in Foresight as positions on a spectrum rather than as rigid and objective categories.

That does not mean the categories are useless. On the contrary, they are essential. Without them, scanning would become an undifferentiated mass of observations. But their usefulness depends on using them in the right way.

A Megatrend is not a box. A Weak Signal is not a box. A Wildcard is not a box. A Critical Uncertainty is not a box.

They are interpretive positions.

They indicate how a team, at a given moment, in a given context, is making sense of a development in relation to evidence, momentum, ambiguity, and strategic relevance. One can imagine a broad spectrum: from highly visible, well-evidenced, widely recognized, system-shaping developments, through more emergent and contested patterns, toward faint signals, discontinuities, and low-probability high-impact possibilities. But the point is not to freeze those positions forever. The point is to use them as part of a living process of scanning and interpretation.

Because the same force may move or evolve.

And so may our reading of it.

A development that begins as a faint Weak Signal may later consolidate into a clear Trend. A perceived Trend may dissolve. A supposed Uncertainty may become increasingly one-directional. A marginal possibility may be repositioned as strategically central because the context has changed.

The map changes. But so do the interpreters.

Classification is never only analytical

In the end, the way we categorize or classify driving forces and signals in a strategic Foresight project is almost always a process of co-creation and negotiation.

That is not a weakness of the method. It is part of its intelligence and richness.

Project teams bring different lenses. Experts bring domain-specific knowledge. Stakeholders bring positional realities. Decision makers bring strategic priorities and institutional constraints. Together, they do not merely “find” the correct category for a force. They interact around it. They test interpretations. They negotiate meaning. They decide what deserves central attention, what remains peripheral, what is mature enough to be treated as a trend, what is unstable enough to remain an uncertainty, and what is disruptive enough to be treated as a wildcard or black swan.

This process is not arbitrary. It is disciplined, but it is still interpretive.

That distinction matters.

Because when people imagine that classification in Foresight should be purely objective, they often misunderstand where the real value lies. The value is not in pretending that every category is self-evident. The value lies in making the assumptions behind the categorization discussable.

Why are we calling this a Trend?

What evidence supports that?

What would lead us to reclassify it?

Over what time horizon does this interpretation hold?

For whom does this look probable, and for whom does it still look uncertain?

These are better questions than the search for false categorical purity.

In many situations, the boundary between Trends, Weak Signals, Wildcards, and Uncertainties is not discovered. More often, it is negotiated.

A more mature use of language in Foresight

The practical implication is not that we should abandon verbal categories. Nor should we try to translate everything into numbers. That would strip Foresight of much of its interpretive richness.

The real implication is that we should use language with greater plasticity and reflexivity.

We should be more aware that words such as possible, plausible, probable, likely, or uncertain are not automatically shared in the way we imagine. We should make assumptions more explicit. We should allow room for participants to surface differences in interpretation rather than hiding them beneath apparently familiar terminology. And we should treat classification not as a final truth claim, but as a provisional strategic positioning.
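One lightweight way to surface those differences in a workshop, offered here only as a sketch, is to ask each participant what percentage they privately attach to a term and then look at how far the answers spread. The point is not to fix the "right" number for a word, but to see which words the group should talk through before relying on them. Everything in the example is an assumption made for illustration: the answers are invented (they are not data from the study), the function names are hypothetical, and the threshold of 10 percentage points is arbitrary.

```python
from statistics import median, quantiles

# Invented workshop answers: the percentage each participant attaches to a term.
# These values are made up for illustration and are NOT taken from the study.
responses = {
    "impossible": [0, 1, 0, 2, 1],
    "possible": [20, 50, 35, 70, 40],
    "likely": [55, 80, 90, 60, 70],
    "certain": [100, 99, 100, 98, 100],
}


def spread_summary(values):
    """Return (median, interquartile range) for one term's answers."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q3 - q1


def terms_to_discuss(responses, iqr_threshold=10):
    """List terms whose interquartile range exceeds an arbitrary threshold."""
    return [
        term
        for term, values in responses.items()
        if spread_summary(values)[1] > iqr_threshold
    ]


if __name__ == "__main__":
    for term, values in responses.items():
        mid, iqr = spread_summary(values)
        print(f"{term:<12} median={mid:>5}  spread (IQR)={iqr:>5}")
    print("Worth discussing:", terms_to_discuss(responses))
```

With these invented answers, the extreme terms cluster tightly while "possible" and "likely" are flagged for discussion. The output is a prompt for conversation about what people mean, not a replacement for that conversation.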

This makes Foresight stronger, not weaker.

Because the purpose of Foresight is not to simulate certainty. It is to expand perception, deepen interpretation, and improve action under conditions where certainty is unavailable.

The goal of Foresight is not to create artificial certainty. It is to improve how people interpret and act under uncertainty.

That is why this kind of study matters.

It reminds us that the challenge is not only to understand the future better. It is also to understand the instability of the language through which we attempt to understand it.

And that is one of the more overlooked disciplines in serious Foresight work.

Closing remarks

The future does not arrive with labels attached.

We create those labels so that we can think together, decide together, and act with greater awareness.

But once we recognize how unstable even basic verbal probability terms can be, we also recognize something deeper: that Foresight is never simply about identifying signals and classifying forces. It is about building shared meaning in conditions where shared meaning cannot be assumed in advance.

That is not a limitation of Foresight.

It is one of the reasons it matters.

Source: Willems, S., Albers, C. J., & Smeets, I. (2020). Variability in the interpretation of probability phrases used in Dutch news articles: a risk for miscommunication. Journal of Science Communication, 19(2), A03.